Data Normalisation standardises raw imagery and derived products into a consistent, interoperable, and cloud-optimised format suitable for large-scale analysis and automation.

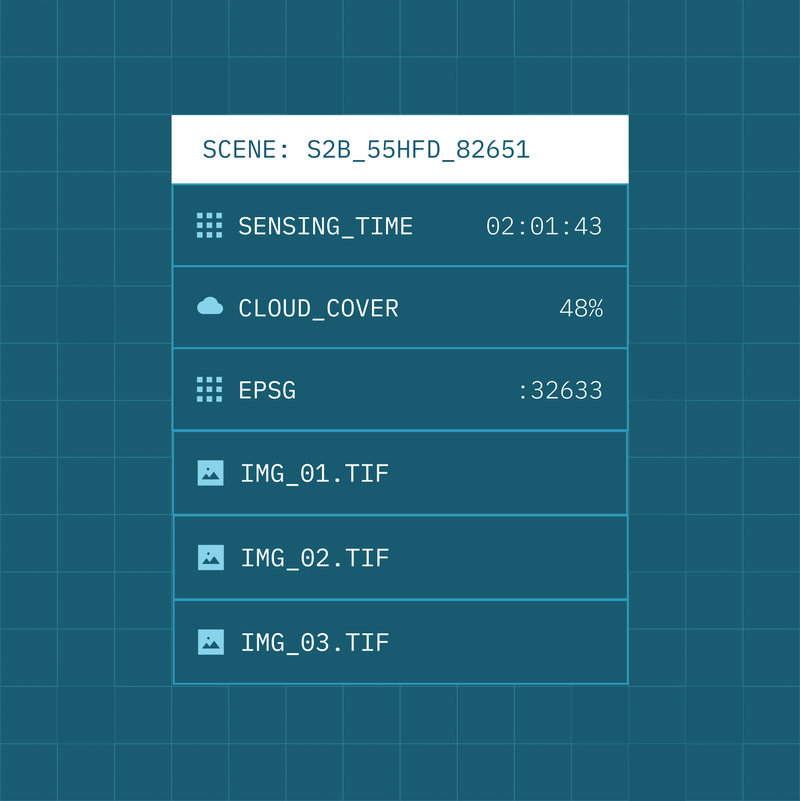

All imagery is normalised into a common STAC-based structure, with raster data automatically converted to Cloud Optimised GeoTIFF (COG) for efficient, scalable access in cloud and hybrid environments. Quality layers and annotations are generated and standardised as part of the normalisation process, ensuring downstream analytics and machine learning pipelines operate on reliable, comparable inputs.
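As a rough illustration of what a normalised record might look like, the sketch below builds a minimal STAC Item that points to a COG raster and a companion quality layer. The function name `build_stac_item`, the asset keys `image` and `quality`, the role names, and the `s3://example-bucket/...` paths are all assumptions made for this example, not the platform's actual schema.

```python
import json
from datetime import datetime, timezone

# Media type commonly used for Cloud Optimised GeoTIFF assets in STAC.
COG_MEDIA_TYPE = "image/tiff; application=geotiff; profile=cloud-optimized"


def build_stac_item(item_id, bbox, acquired, cog_href, quality_href):
    """Return a minimal STAC Item dict for one normalised scene (hypothetical helper)."""
    west, south, east, north = bbox
    return {
        "type": "Feature",
        "stac_version": "1.0.0",
        "id": item_id,
        "bbox": [west, south, east, north],
        # Footprint as a closed polygon ring derived from the bounding box.
        "geometry": {
            "type": "Polygon",
            "coordinates": [[
                [west, south], [east, south], [east, north],
                [west, north], [west, south],
            ]],
        },
        # STAC expects an RFC 3339 timestamp in UTC.
        "properties": {"datetime": acquired.isoformat().replace("+00:00", "Z")},
        "links": [],
        "assets": {
            # Asset keys and roles here are illustrative assumptions.
            "image": {"href": cog_href, "type": COG_MEDIA_TYPE, "roles": ["data"]},
            "quality": {"href": quality_href, "type": COG_MEDIA_TYPE, "roles": ["data-mask"]},
        },
    }


item = build_stac_item(
    "scene-001",
    (10.0, 45.0, 11.0, 46.0),
    datetime(2024, 1, 1, tzinfo=timezone.utc),
    "s3://example-bucket/scene-001.tif",
    "s3://example-bucket/scene-001-quality.tif",
)
print(json.dumps(item, indent=2))
```

Because every scene would carry the same keys and media types regardless of sensor or supplier, downstream tools could locate the raster and its quality layer without source-specific logic.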

By enforcing consistent structure, formats, and quality controls at ingestion, Data Normalisation removes friction between sensors, suppliers, and analytics tools, allowing organisations to onboard new data sources quickly and operate at scale.

- Access imagery through cloud-native formats optimised for performance and scale

- Eliminate format and structure inconsistencies across sensors and providers

- Ensure data is analysis-ready on ingestion

- Enable reliable analytics and AI pipelines using standardised inputs

- Accelerate onboarding of new sensors, missions, and data sources

Resources

Further resources and documentation for the normalisation standards and pipeline are coming soon.